Here's how it works

We'll guide you to the best governance solution and help you implement it successfully

Browse & Learn

Read our in-depth research guides or chat with our advisors to make informed decisions about AI governance and compliance solutions.

Compare Top Matches

Our comparison tools filter vetted governance vendors based on your specific compliance requirements and organizational needs.

Pick Your Solution

Gain confidence in selecting the right governance solution. We'll help you evaluate vendors and negotiate implementation terms.

Browse all categories

Need guidance? Connect with a

GetAIGovernance Advisor - it's free

Frequently Asked Questions

Everything you need to know about GetAIGovernance

What is AI governance?

AI governance is the set of policies, processes, and tools organizations use to make sure their AI systems operate safely, fairly, and in line with legal requirements. It covers everything from model oversight and bias detection to regulatory compliance and accountability structures.

What signals should I be monitoring in an AI system?

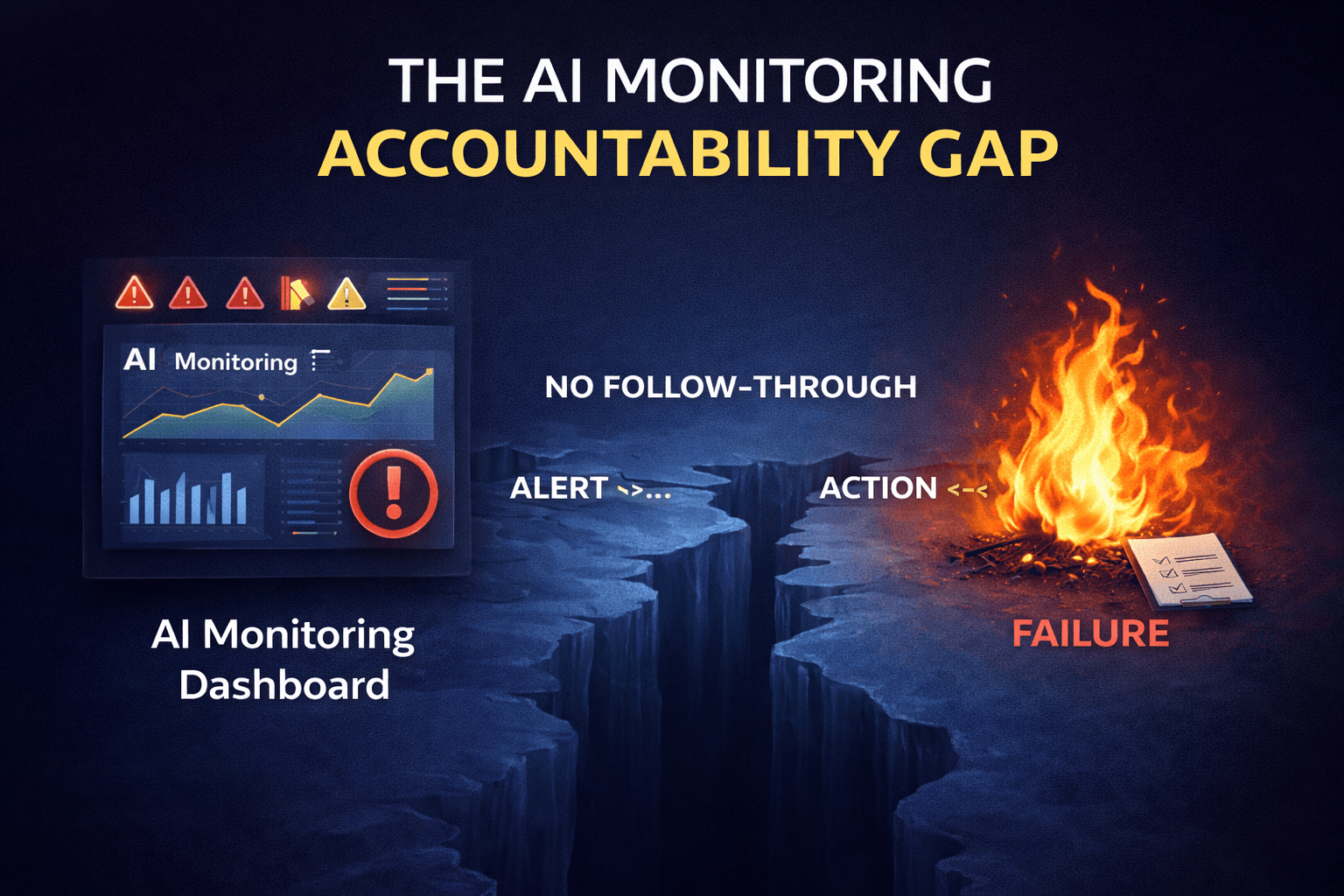

AI monitoring covers twelve distinct signal categories, and no platform covers all of them equally. The core groups are performance signals (accuracy, latency, error rates), data drift signals (whether inputs are changing from what the model was trained on), output quality signals (hallucination rates, toxicity, relevance), cost and resource signals (token usage, API spend), user behavior signals, and system health signals. The right signals depend on your primary risk. If your biggest concern is output accuracy, prioritize performance and output quality monitoring. If you're running autonomous agents with live system access, cost signals and user behavior signals become critical. If you're in a regulated industry, audit trail and pipeline signals matter most. Buying a monitoring platform before identifying which signal gaps you actually have is how teams end up with expensive dashboards nobody acts on.

What is coding agent sprawl and why should enterprises care about it?

Coding agent sprawl is what happens when an engineering organization adopts multiple AI coding tools — Cursor, Claude Code, Codex, and others — without a centralized layer to govern them. Each tool carries its own identity, its own data access permissions, and its own cost footprint, with no coordination between any of them. A developer connecting a coding agent to internal Jira tickets, GitHub repositories, and Confluence docs through an MCP server can accidentally create an agent with more data access than any human developer on the team — and no audit trail to show what it read or acted on. The risks are real: runaway inference costs, ungoverned data access, compliance exposure, and zero visibility for security or finance teams. Organizations need centralized identity, cost controls, and audit logging that cover every coding tool simultaneously, not tool-by-tool.

What is the difference between AI governance and AI security?

AI governance defines the rules, accountability structures, and policies that determine how AI systems should operate. AI security enforces those rules at the moment of execution — blocking harmful actions, filtering dangerous inputs, and preventing data from leaving systems it shouldn't leave. Governance is the policy layer; security is the enforcement layer. A governance framework that says "agents cannot access customer PII without authorization" is useless without a security control that actually stops the agent from accessing that data in the first place. Most organizations need both, and they need them connected. Governance without security is a wish list. Security without governance is enforcement without a clear standard to enforce against.

What is prompt injection and why is it an AI security risk?

Prompt injection is an attack where malicious instructions are hidden inside a user input or connected data source, tricking an AI model into overriding its original instructions. A support ticket with an embedded command, a document with encoded payloads, a database field with rogue instructions — all of them can redirect a model's behavior without triggering any conventional security alert. The risk is serious because AI models are trained to be helpful, which means they follow instructions. A model that reads a poisoned input and complies is doing exactly what it was designed to do. Organizations need input validation and prompt filtering controls sitting directly in the request path — before the model reads anything — to defend against this class of attack.

Trusted insights, updated daily

Exclusive research from our expert analysts.

AI Governance Platforms

AI Governance Platforms

Apr 26, 2026

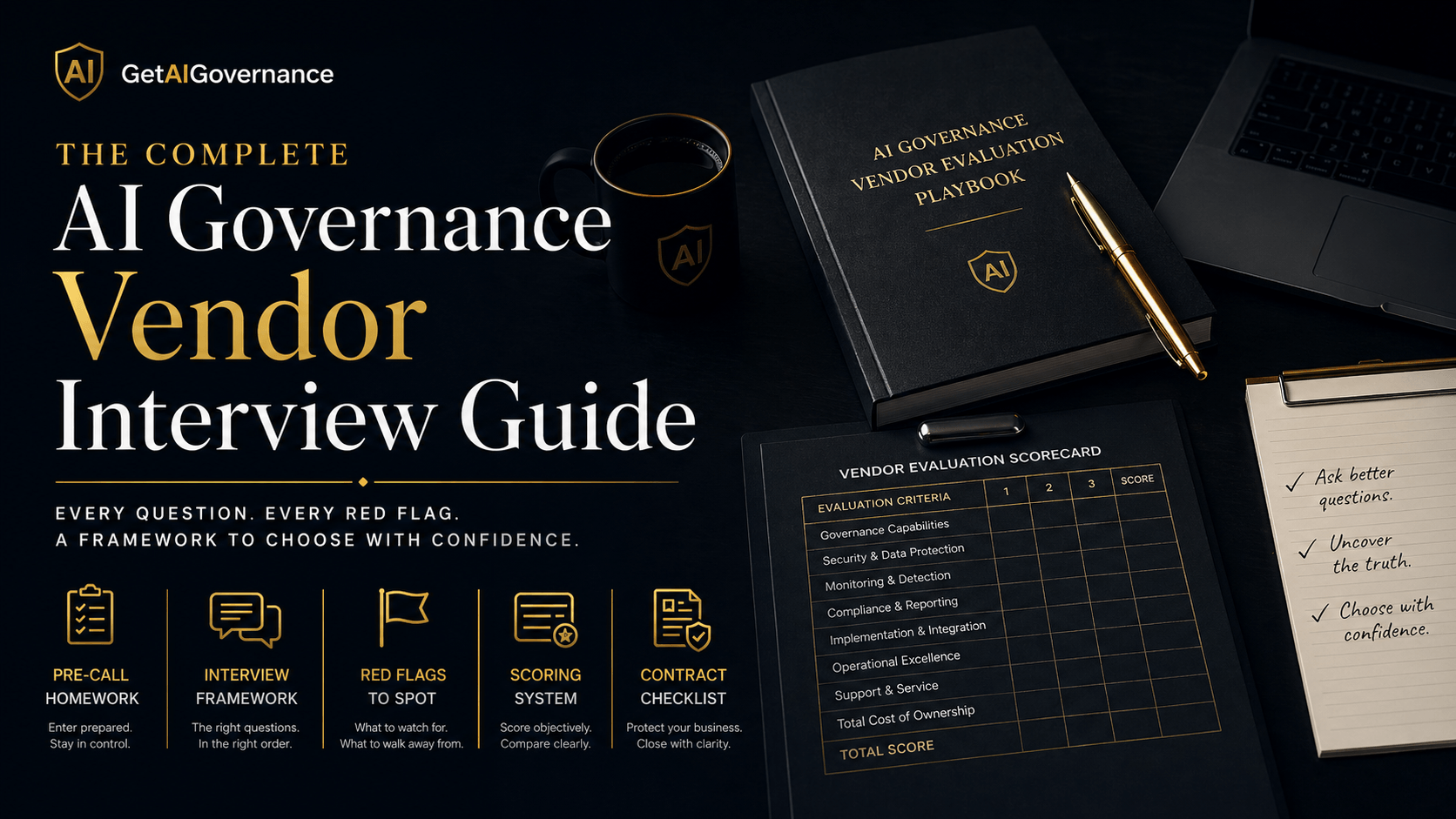

The Complete AI Governance Vendor Interview Guide

Read More AI Risk & Controls

AI Risk & Controls

Apr 23, 2026

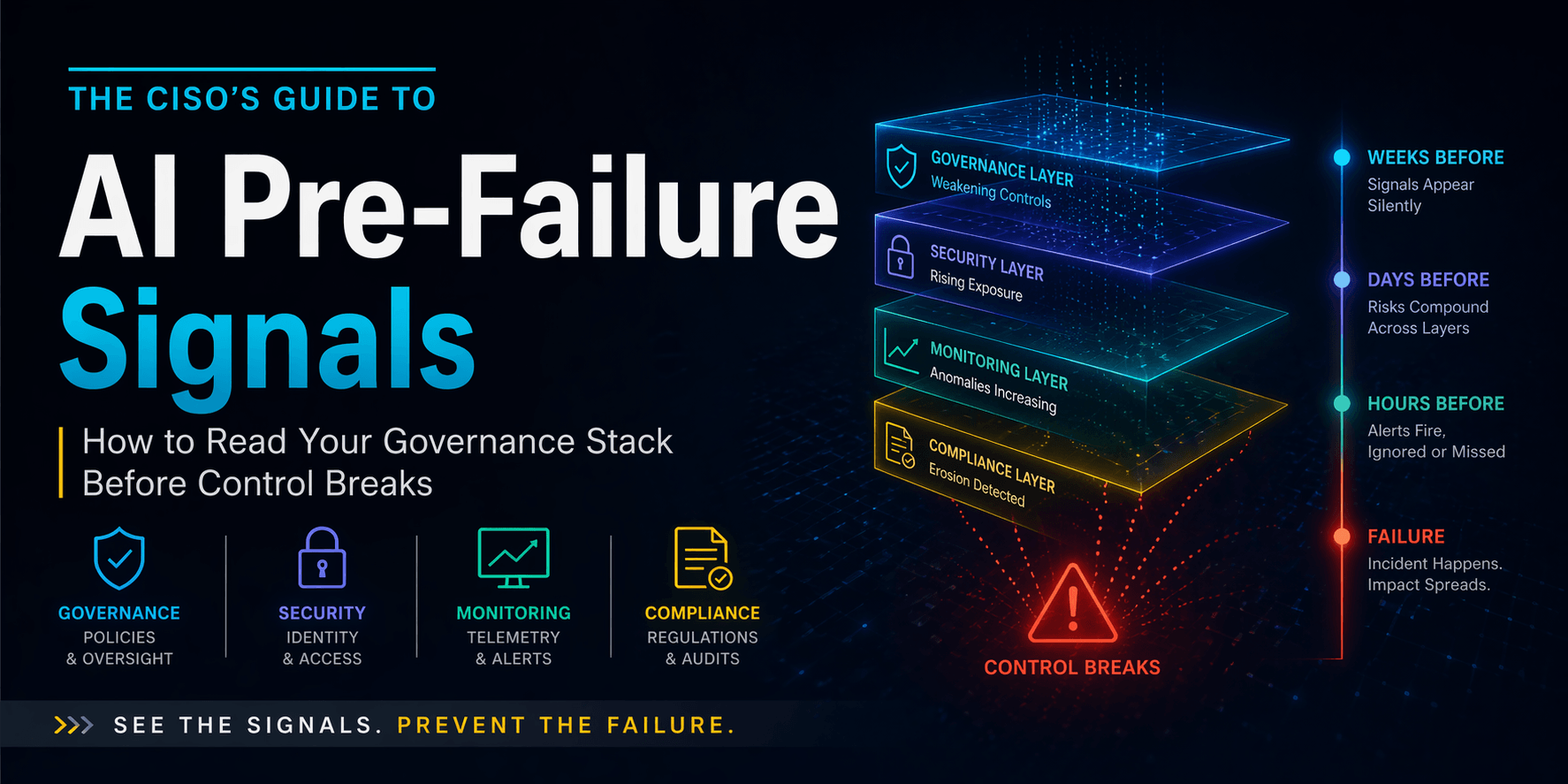

The CISO's Guide to AI Pre-Failure Signals: How to Read Your Governance Stack Before Control Breaks

Read More Model Observability

Model Observability

Apr 20, 2026

Your AI Monitoring Dashboard Is Full of Data Nobody Acts On

Read MoreStay ahead of Industry Trends with our Newsletter

Get expert insights, regulatory updates, and best practices delivered to your inbox